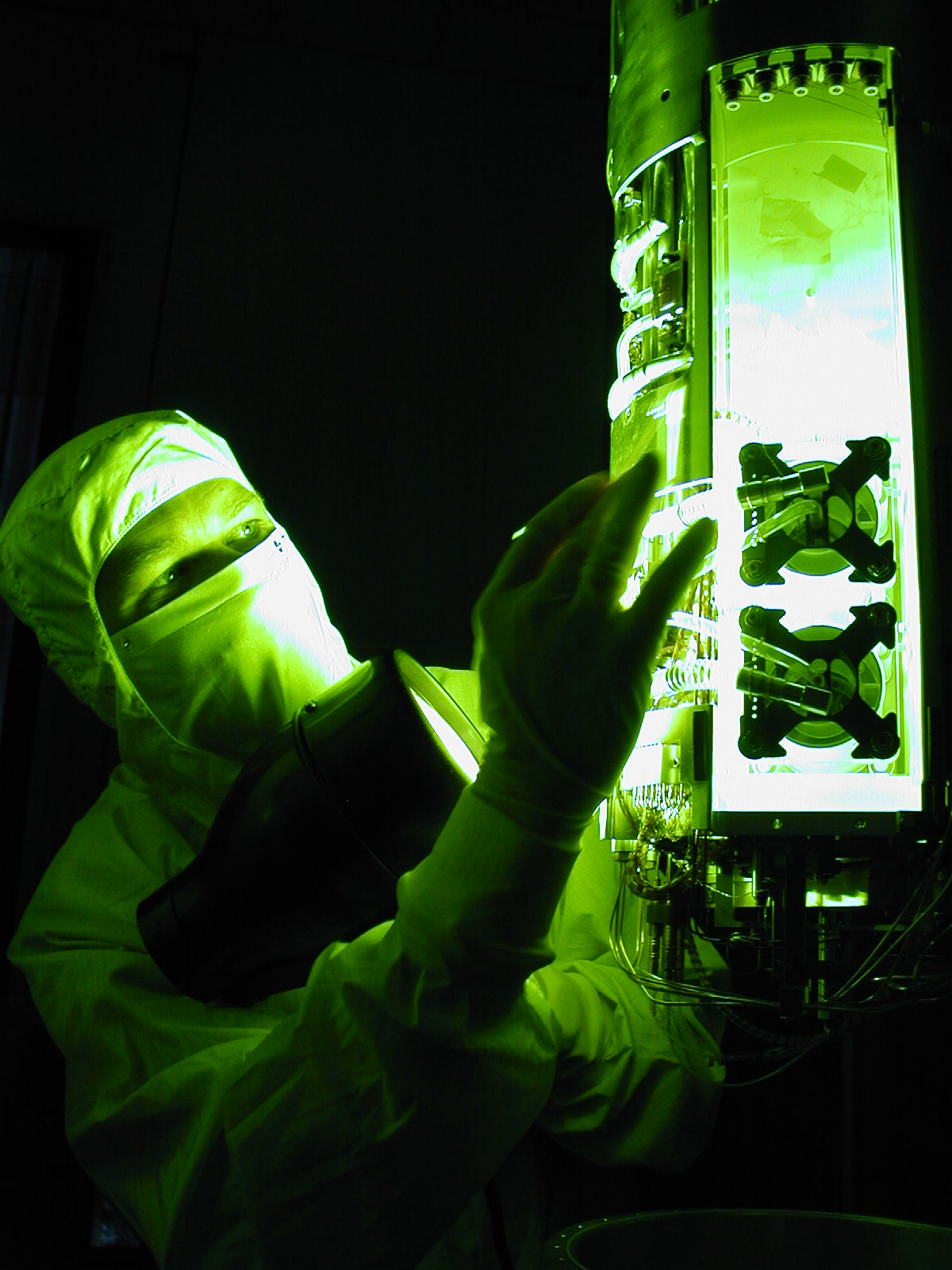

In Firing Room 1 at Kennedy Space Center, shuttle launch team members put the shuttle system through an End-to-End (ETE) Mission Management Team launch simulation. The ETE transitioned to the Johnson Space Center (JSC) for the flight portion of the simulation, with the STS-114 crew in a simulator at JSC. Such simulations are common before a launch to keep the shuttle launch team sharp and ready for liftoff. Photo Credit: Kennedy Space Center

By David G. Rogers

It’s been more than twelve years since I flew planes on and off aircraft carriers. One flight in particular literally changed my life. I was the aircraft commander and was flying with my squadron’s executive officer (XO), who was two pay grades above me but had limited experience flying this particular aircraft and landing on ships. To maintain proficiency requirements, he was to get us aboard that day. During the approach, he got low and did not respond to the landing signal officer’s call for power. Then he got caught in a downdraft and got really low. The landing signal officer screamed for power, then called to wave off the approach. When my XO was slow to respond, I was forced to take control of the aircraft, execute the wave-off, and get us aboard.

After looking at the landing video, I was shocked to learn that we were dangerously close to crashing into the back of the ship. In the thirty minutes that followed our landing, I demanded a crew debrief that included a brutally honest self-assessment of my performance. I asked myself, “What did I do that led to the ‘success’ of the mission?” Then I asked what I did that contributed to nearly losing the lives of my fellow crewmembers and myself in the process. What I did right was take control when it was required. What I did wrong was wait as long as I did to take action. Although the XO was more senior and we got along very well, I did not assess his skill level appropriately. I put too much stock in his pay grade and position and forgot to consider that his experience with this aircraft and flying aboard aircraft carriers was very limited. I should have been just as cautious as I was with a pilot fresh out of training. I learned that reflecting on problems and near misses is both an individual and a team responsibility, which can help build excellence in both. NASA has begun incorporating this best practice in some areas, but it could do more.

Understanding the Real Problem

Let’s suppose that an airline pilot fails to acquire an updated weather forecast at his destination. He is behind schedule, and his passengers will miss their connecting flights if he delays much longer, which will mean a financial penalty for the company. Approaching his destination, he notices that some other, smaller aircraft are diverting to fields with better weather. He sees the approaching storm ahead at the end of the runway but still elects to land. On his approach he encounters some dangerous wind conditions but manages to get the plane on the ground and taxis to the gate. The fact that the outcome was favorable, however, does not mean that the pilot made the correct decision.

Like the pilot, our NASA teams need to examine their experience rigorously in order to learn from it. Studies over the past twenty-five years have consistently reported that between 70 and 80 percent of accidents within high-risk and high-reliability organizations can be attributed to human performance errors. Challenger and Columbia represent the most severe, but certainly not all, of NASA’s human performance errors. The primary reason why we repeated the same mistakes is that we corrected some of the symptoms, but we did not effectively address the greatest contributing cause of our errors: our organizational culture.

Since human error cannot be completely eliminated, the trouble lies with a prevailing organizational culture that allows errors to go unchecked. Effective team skills and behaviors give us the tools necessary for avoiding mistakes, but our willingness and commitment to use those tools and continually sharpen them through constant evaluation and reevaluation is what has the largest impact on managing human error and developing exceptional teams. Without this, we as individuals, teams, and an organization are likely to slowly drift back to the same behaviors that created the culture that allowed critical errors to occur in the past. This is where we missed our opportunity after Challenger. We “fixed” many weaknesses and processes but never put in place a long-term cultural shift solution that continually improves how well we communicate and make decisions as a collective team.

A Case Where We’re Getting It Right

The Space Shuttle Program (SSP) Mission Management Team (MMT) is a recent example of a team that has embraced an attitude that has brought about a definite cultural change within the NASA team at large. As a result of the Columbia Accident Investigation Board’s recommendations, the MMT began an intensive training program that included initial and yearly certification requirements for all MMT members. For the most part, people were aware of the skills and behaviors required of them; what they lacked were ways to develop and sharpen them. After two and a half years of senior program management leadership, team training, self-study, and numerous MMT-specific simulations, the MMT that served during STS-114 represented a team far superior to what had been in place for more than nineteen years.

After STS-114, the SSP deputy manager began to look at ways to build upon the team’s marked improvement. He recognized that the team training the MMT was receiving could be enhanced if it used their own real-world examples to illustrate the training concepts. By tapping into NASA’s own internal resources and talents, key Johnson Space Center Safety and Mission Assurance (JSC S&MA) personnel were asked to refine the training to better meet the specific needs of the MMT membership. The MMT team training is now taught by JSC S&MA and uses shuttle MMT examples taken from MMT training simulations and past shuttle flights. This training restructuring also provided the opportunity to include more effective team debriefing and individual team member self-assessment skills.

A turning point occurred when the chair of the MMT established his expectations during an MMT debriefing. This wasn’t done by memo alone, but through mentorship and superior leadership—by modeling the behaviors he expected his team members to emulate. As a result of this action, MMT debriefs are consistently characterized as being brutally honest and open, with all egos put aside both from a team perspective and in each team member’s assessment of his or her own performance.

The impact of these measures has been profound. The shuttle MMT membership has shown a steadfast commitment to implementing continual improvement. They have adopted a learning organization mentality where every decision and team interaction, whether it occurs during a simulation or actual mission, represents opportunities to learn and improve both as a team and as individuals. They are especially sensitive to identifying areas where recent successes could lead to complacency. Dissenting opinions—viewed as alternative solutions among the team—are encouraged and actively sought.

Space Shuttle Mission Management Team members take notes during an eight-day simulation at Johnson Space Center in March 2005, preparing for Return-to-Flight mission STS-114. Photo Credit: NASA

Recently, the shuttle MMT has taken a fresh approach to lessons learned. Lessons learned databases capture the “historical record” of errors, but they rarely raise the level of awareness sufficiently to prevent the same problems from recurring. Team debriefs and self-assessments work better because they are continually reviewed and give team members a chance to take accountability and actively implement specific actions for improvement. To keep lessons from being forgotten, the MMT conducts a pre-brief prior to its next event to remind the team of previous lessons learned and develop improvement strategies in order to keep from “running over the same land mines.” This concept is not new to us. It is the very essence of a continual improvement process. What makes this different is that the MMT is making this model a living, breathing process for continual improvement.

Continually Learn from Past Effort

We are all stakeholders in effectively managing human error. To gain team expertise, it is essential—whether after a mission or a program milestone—to gather everyone together and evaluate the team’s performance and have each member articulate his or her self-assessment. This critical and often overlooked step is, in my opinion, what separates the team of experts from the expert team. Whether at the program, project, or functional level, these strategies apply to all team environments.

In order to effect a true cultural change, we must adopt a learning organization mind-set. We must never be satisfied with our current level of performance. We must always be asking ourselves, “How can we improve?” Expert teams recognize that they are only as sharp as their last decision. Achieving and sustaining a positive team culture and, in turn, organizational safety culture is not a discrete event but a journey. We must never let our guard down and allow ourselves to be fooled into believing that we have gotten as good as we can get.

In these past few years, I have been pleased to witness these behaviors spill over to other boards, panels, and meetings across NASA, such as the past three flight readiness reviews for STS-121, STS-115, and STS-116; recent Shuttle Program Requirements Control Board meetings; SSP System Integration Control Board meetings; and recent Flight Techniques Panel meetings. Many in the NASA family are committed to not only sustaining but continually improving our safety culture in the midst of the current dynamic and challenging environment. It personally gives me a great sense of pride to be part of an outstanding organization that has demonstrated the integrity and moral courage to commit itself to doing all that is humanly possible to truly learn from our past mistakes.