By Laurence Prusak

A number of years ago I was asked by some clients to come up with a rapid-fire indicator to determine whether a specific organization was really a “learning organization.” Now, I have always believed that all organizations learn things in some ways, even if what they learn does not correspond well to reality or provide them with any useful new knowledge. After thinking about the request for a bit, though, I decided the best indicator would be to ask employees, “Can you make a mistake around here?”

When people in various organizations tried this out in practice, asking groups of employees that key question, they were almost always given the same response: “Yes, you can make a mistake, but you will pay for it.” Some of these organizations were the very same ones that touted themselves as “learning organizations” in their annual reports and public-relations statements, but if they penalize their employees for making mistakes, not much learning will happen.

Why? Well, if you pay a substantial price for being wrong, you are rarely going to risk doing anything new and different because novel ideas and practices have a good chance of failing, at least at first. So you will stick with the tried and true, avoid mistakes, and learn very little. I think this condition is still endemic in most organizations, whatever they say about learning and encouraging innovative thinking. It is one of the strongest constraints I know of to innovation, as well as to learning anything at all from inevitable mistakes—one of the most powerful teachers there is. Some recent political memoirs by Tony Blair and George Bush also inadvertently communicate this same message by denying that any of their decisions were mistaken. If you think you have never made a mistake, there is no need to bother learning anything new.

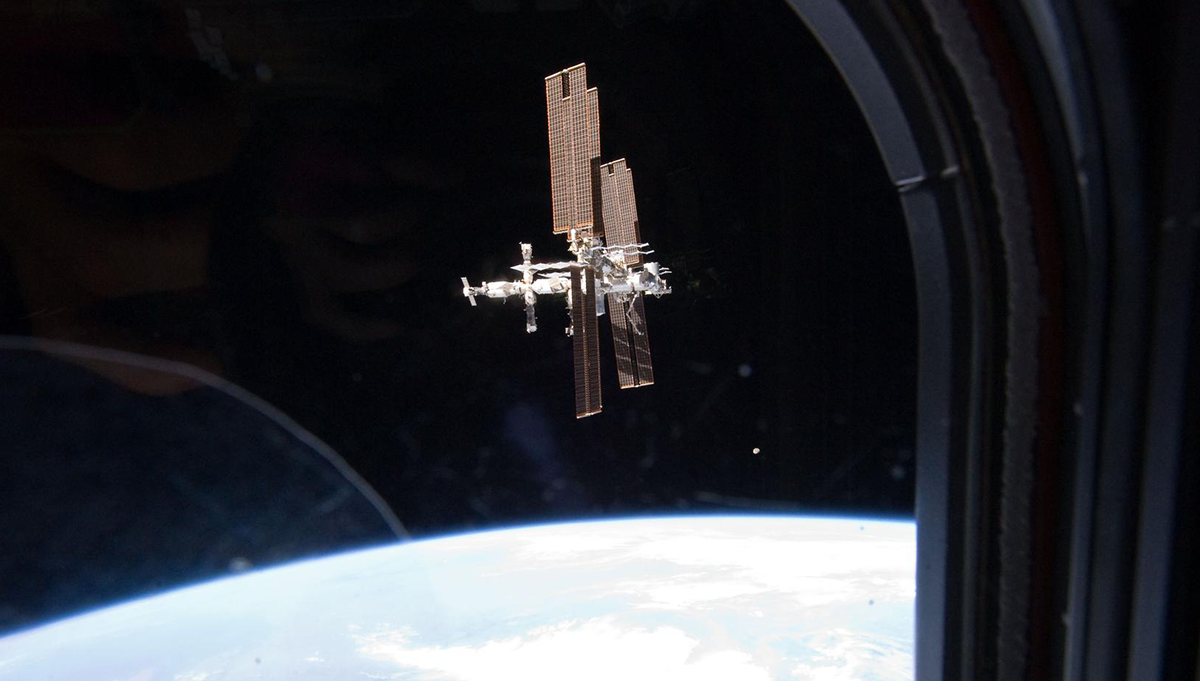

The early history of NASA is partly a history of making mistakes—some of them very costly—that helped develop the knowledge needed to land men on the moon and put rovers on Mars, among other triumphs. Some believe that NASA has become too mistake-averse over time and that an emphasis on avoiding mistakes limits the agency’s ability to innovate. (Take a look at the interview with Robert Braun in the summer 2010 issue of ASK, for instance.)

A recent book has a novel and appealing approach to this whole subject. Written by Kathryn Schulz, it is called Being Wrong. Ms. Schulz wants to establish an entirely new discipline called “wrongology” to study the causes, implications, and, most of all, the acceptance of being wrong. She presents a more populist version of the great book by Charles Perrow, Normal Accidents, but her take on the subject is more individually based and funnier.

What would happen if we all accepted that being wrong is as much a part of being human as being right, and especially that errors are essential to learning and knowledge creation? What would our values and institutions look like under this new dispensation? I can easily summon up the grave image of Alan Greenspan testifying before Congress last year on the causes of the financial crisis. What was so very startling was seeing him admit that he was wrong! It was such an unusual event that it made headlines around the world. But why should it be so rare and so startling? Greenspan had a hugely complex job, one where many critical variables are either poorly understood or not known at all. Nevertheless, until that day neither he nor any other federal director I have heard about had ever said anything remotely like it before our elected officials and the public.


Perhaps Ms. Schulz’s book will at the very least cause us to reflect a bit more on this oft-buried subject. It was a favorite theme of philosophers, when philosophers still wrote for the masses, and of literature. Being too proud to admit you are wrong, or admitting it only when it is too late, is central to several of Shakespeare’s plays. King Lear comes to mind. It is the subject of more novels than I can begin to list here. If you are interested, try tackling War and Peace (and I highly recommend it; it’s a great read), and you will see how Tolstoy deals with the subject of Napoleon’s colossal mistakes and (Tolstoy being the great writer he is) the stubborn mistakes of some Russian generals, too.

While Alan Greenspan is not often thought of as a heroic figure, he has the laudable distinction of being one of the very few people to say directly and clearly that he made a mistake. In doing so, he at least opened the door to the possibility of learning to do things differently—and better—in the future.
