In 1999, the Wide-field Infrared Explorer (WIRE) lost its primary mission thirty-six hours after launch. Those who worked on WIRE, which was the fifth of the Explorer Program’s Small Explorer-class missions, thought they had done what they needed to achieve success.

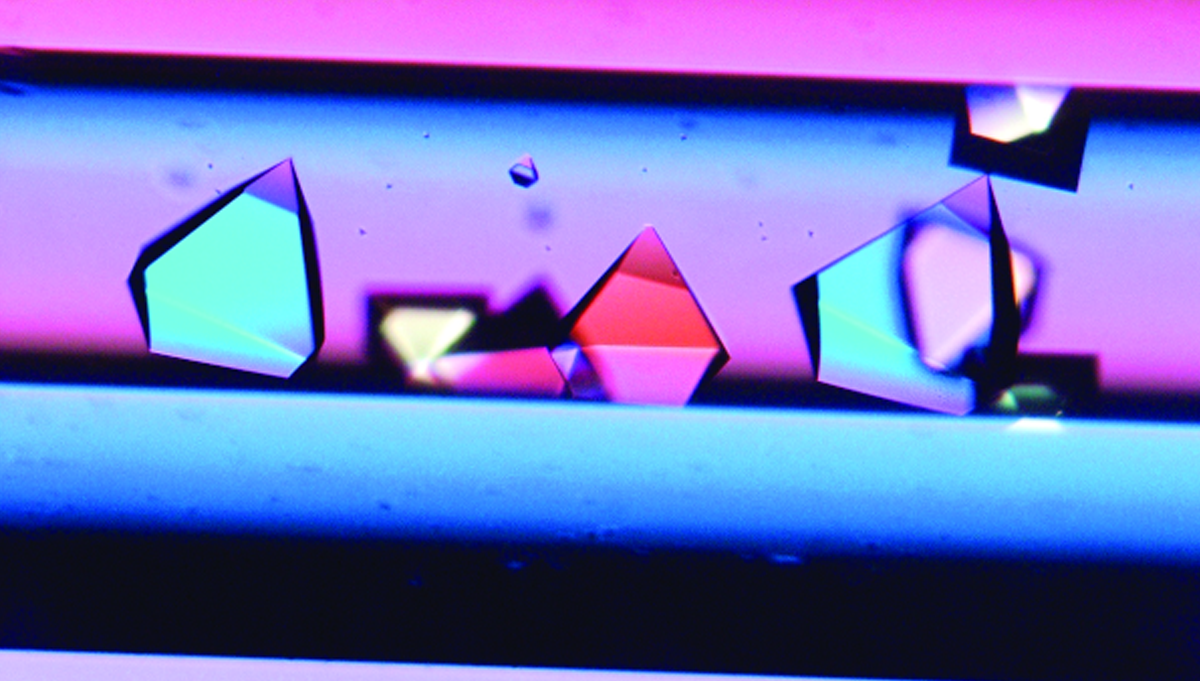

Closeup of the cryostat during hydrogen testing at Lockheed-Martin’s Santa Cruz facility in July 1997.

Photo Credit: NASA/Lockheed Martin

But a mishap investigation and a 2002 Government Accountability Office report on NASA’s lessons learned highlighted poor communication and incomplete testing as contributors to this and other NASA failures. The team’s informal motto, “insight, not oversight,” also helped WIRE’s issues stay hidden.

The motto was meant to respect the professionalism and expertise of each organization involved in the mission. WIRE had a complex organizational structure: mission management at Goddard Space Flight Center, instrument development at the Jet Propulsion Laboratory (JPL), and instrument implementation at a contractor’s facility under JPL supervision. This arrangement was meant to capitalize on the strengths of each organization. Guiding team interactions with “insight, not oversight” was intended to avoid perceptions of distrust or micromanagement and to foster a smooth working arrangement, free of the hang-ups that come with too much oversight. This approach, however, had unintended consequences.

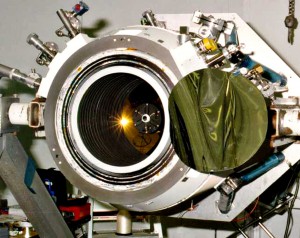

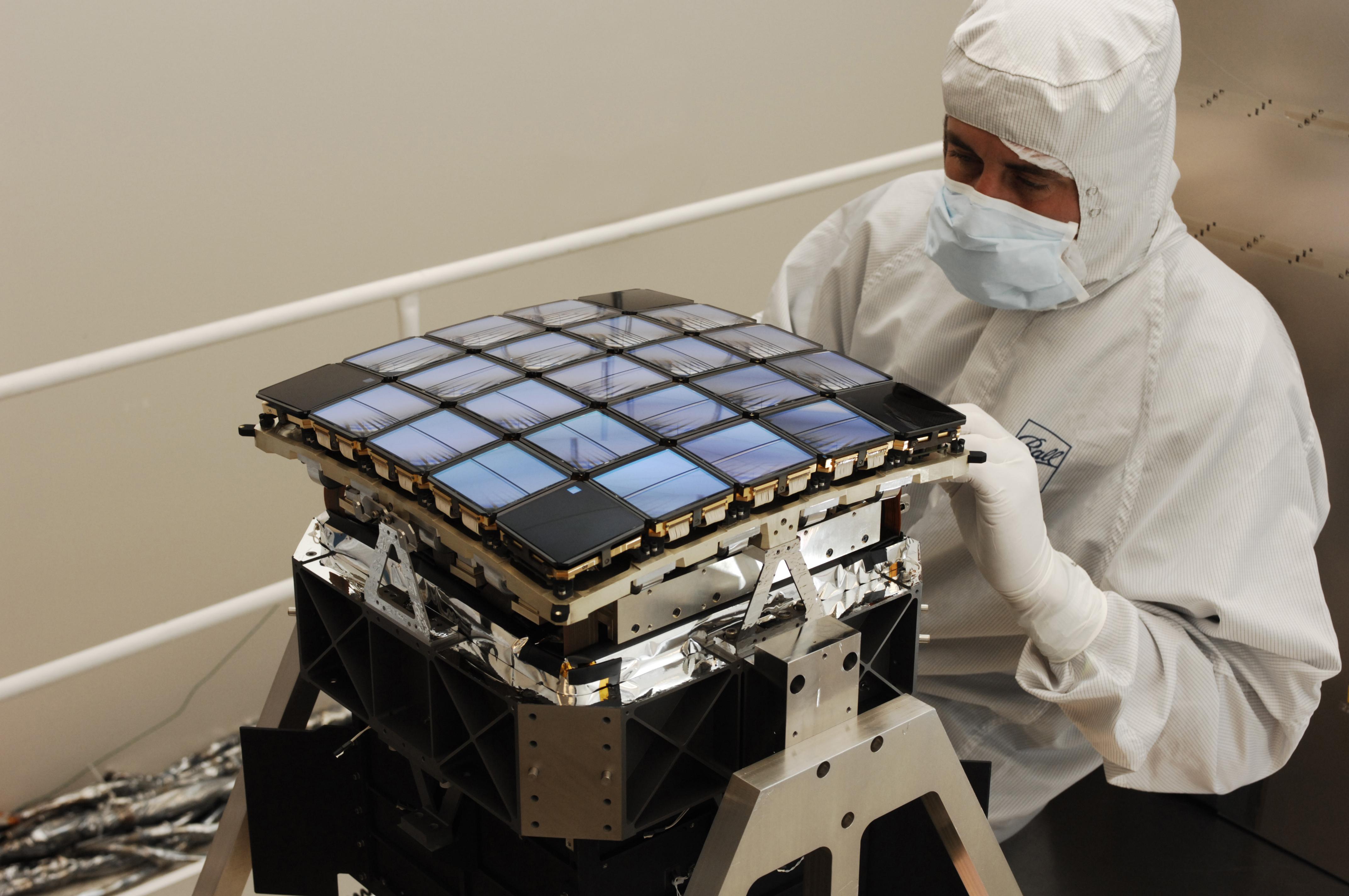

WIRE’s sensitive infrared telescope was the most visibly affected by the limited oversight. Meant to study how galaxies formed and evolved, the telescope’s infrared detectors required an extremely cold, 7-kelvin environment in order to operate with precision and without interference from the heat of the telescope itself. To achieve this, the telescope was protected inside a dewar, or cryostat, filled with frozen hydrogen. The plan was to keep the telescope safely covered inside the cryostat until WIRE reached the deep cold of space. Then the cryostat cover would be ejected and the telescope would begin operations.

“We spent three years ensuring that cover would come off, and probably only a handful of hours making sure that it would stay on,” said Bryan Fafaul, who was the mission manager for WIRE.

Soon after launch—too soon—the cover ejected.

Communication Breakdown

During development, delivery of the pyro box that would eject the cover had been delayed. As a result, the box wasn’t adequately included in a scheduled peer review of WIRE’s electronics. A change of management, and the failure to tell the new management that the peer review had been inadequate, meant the design received no additional review.

“We as engineers and scientists do a very good job addressing technical anomalies. We do a great job diagnosing the problem, making the appropriate corrections, and performing the necessary regression testing to ensure success,” said Fafaul. “Management anomalies are just as important but are more difficult to address. They take a long time to recognize, aftereffects are unclear, and regression testing is difficult. For WIRE, we had an issue: we weren’t communicating anymore. Ultimately, we had some personnel change out, and that made a significant difference in our communication. But the thing we didn’t know how to do was analyze what damage had been done as a result. We made a change, but we didn’t know how to go back and verify [regression test] what we caught and what we missed. We just didn’t know how to do that.”

The result was a chain reaction of miscommunication that led to a lack of insight.

Jim Watzin, who was the Small Explorer project manager at the time, described the communication difficulties as a matter of misconceived ownership and distrust of outside opinions. “These folks feared oversight and criticism and hid behind the organizational boundaries in order to ensure their privacy,” he wrote in response to a case study on the mission. “They lost the opportunity for thorough peer review (the first opportunity to catch the design defect) and in doing so they lost the entire mission.”

“Everyone was being told to back off and let the implementing organization do its thing with only minimal interference,” added Bill Townsend, who was Goddard’s deputy director at the time, in his own response. “… This guidance was sometimes interpreted in a way that ignored many of the tenets of good management. Sometimes the interpretation of this was to do nothing … Secondly, WIRE had two NASA centers working on it, one [JPL] reporting to the other [Goddard]. Given that either center could have adequately done any of the jobs, professional courtesy dictated neither get in the way of the other. While this was a noble gesture, it did create considerable confusion as to who was in charge of what.”

As a result, the contractor was able to proceed with the pyro box development without the peer review oversight needed to ensure success. Some crucial details about the box design were incomplete, others had little documentation, and still others were included in notes but left off data sheets. No one had a complete view of all the circuitry involved in the pyro box, and an indication that something might be amiss wasn’t fully analyzed during integration testing.

Test as You Fly, Fly as You Test

One of the undocumented pieces of information was the startup behavior of the pyro box: how long the box took to power up, and what effects other current signals would have on its field-programmable gate array (FPGA) during startup. This detail was overlooked because delays in delivering the box’s design kept it out of both the subsystem peer review and the mission system design review.


Testing of the pyro box was challenging because of the cryostat. “It was a hydrogen dewar. You can’t just load it up with hydrogen and take it into any building and test it,” explained Fafaul. “So we had to adapt and make provisions to do things a little bit differently.”

Because the cryostat could not be tested with the actual pyro box while filled with frozen hydrogen (its nominal, or ideal, state), the team used a pyrotechnic test unit to simulate the pyro event. The test unit had been used successfully in testing for previous Small Explorer-class missions and was well known for being a bit finicky about false triggers. That knowledge, along with a contractor’s documented explanation of a similar event, became the basis for dismissing a valid early-trigger event that surfaced during spacecraft testing.

Before WIRE launched, the pyro box on the cryostat had been powered off for nearly two weeks, allowing any residual charge in the circuitry to bleed off. Residual charge, it turned out, was what had kept the pyro box in a valid configuration during spacecraft testing, when the box was powered up almost daily. When the team sent a signal to power up the system after launch, the pyro box powered on in an indeterminate state and the spacecraft immediately fired all pyro devices. The cryostat cover blew off, exposing the frozen hydrogen to the heat of the sun. The hydrogen boiled off violently, sending the spacecraft into a 60-rpm spin. Without the cryostat’s protection, the infrared detectors would misinterpret the telescope’s own heat as signal noise, which effectively ended WIRE’s primary mission.

Taking Tough Lessons to Heart

“For every shortcoming we had on WIRE, you’ll find nearly an identical shortcoming in every successful mission. Like it or not, you’re close to failure all the time,” said Fafaul.

“I’ve had seven or eight different offices since my WIRE days, and directly across from my desk you will always find my picture of WIRE,” he continued. “There are important lessons there that I want to be reminded of every day as I move through life.”

Among the tough lessons learned during WIRE, Fafaul took six especially to heart:

- Test and re-test to ensure proper application of FPGAs.

- Peer reviews are a vital part of mission design and development.

- Effective closed-loop tracking of actions helps keep everyone informed of progress or delays.

- Managing across organizational boundaries is always challenging. Don’t let respect for partnering institutions prevent insight.

- Extra vigilance is required when deviating from full-system, end-to-end testing.

- System design must consider both nominal and off-nominal scenarios, and teams must take the time to understand and communicate anything that doesn’t look right.

“I remind everybody constantly that we are all systems engineers,” explained Fafaul. “I expect everybody, down to the administrative staff, to say something if they see or hear anything that doesn’t seem right. Remember, you need to be a team to be an A team.”

Despite the loss of its primary mission, the team managed to recover WIRE from its high-speed spin, and a scientist developed a very successful secondary mission using the spacecraft’s star tracker. WIRE began to study the oscillations in stars, releasing data that led to new scientific discoveries. WIRE continued to operate until the summer of 2011, when it returned to Earth.

More Articles by Kerry Ellis

- International Life Support (ASK 44)

- Permission to Stare-and Learn (ASK 42)

- X-15: Pushing the Envelope (ASK 40)

- Engineers Without Borders (ASK 39)

- NextGen: Preparing for More Crowded Skies (ASK 38)